
Activation Function Ablation Study for 3D Point Cloud Classification

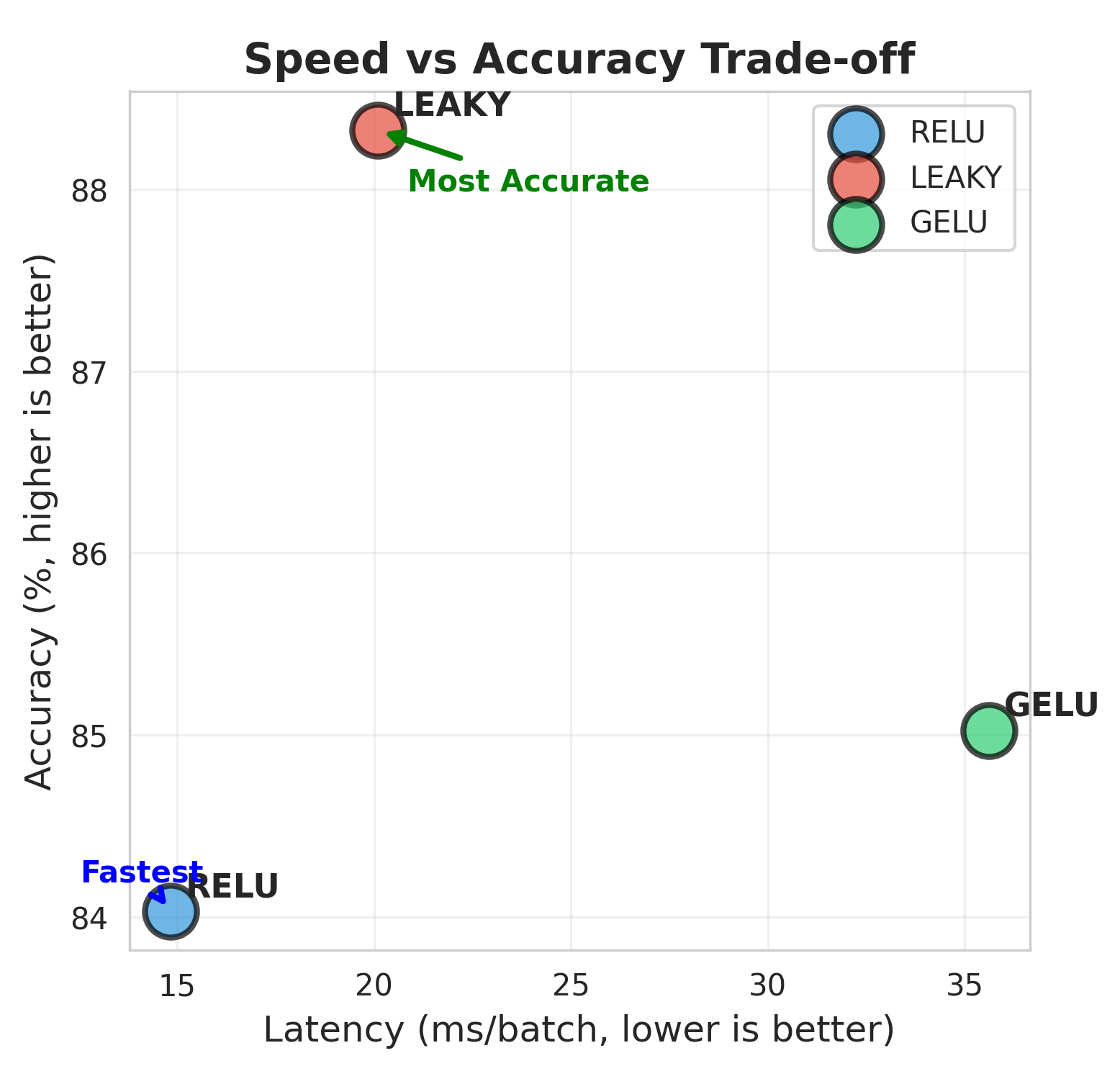

Completed

ReLU vs Leaky ReLU vs GELU in a PointNet baseline: accuracy, stability, and latency under a fair, single-switch setup

activations deeplearning ablationstudy

2025

About this project

- Leaky ReLU and GELU tied for best accuracy (89.98%) on ModelNet10 in this setup.

- ReLU delivered the fastest inference (2.4× faster than GELU) and is the best suited to deployment and INT8 quantization.

- GELU trained stably but was significantly slower than ReLU on this backbone (ReLU ran inference 2.4× faster).
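A "single-switch" setup, as described in the summary, means that only the activation function changes between runs while everything else stays fixed. The sketch below illustrates that idea in plain Python. It is not the project's code: the names `ACTIVATIONS`, `get_activation`, and `apply_layer` are hypothetical, the Leaky ReLU slope of 0.01 is an assumed default, and GELU is written in its exact erf-based form.

```python
import math


def relu(x: float) -> float:
    # max(0, x)
    return max(0.0, x)


def leaky_relu(x: float, negative_slope: float = 0.01) -> float:
    # The slope of 0.01 is an assumption, not a value taken from the study.
    return x if x >= 0 else negative_slope * x


def gelu(x: float) -> float:
    # Exact GELU: x * Phi(x), where Phi is the standard normal CDF.
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))


# The single switch: one string selects the activation, nothing else changes.
ACTIVATIONS = {"relu": relu, "leaky_relu": leaky_relu, "gelu": gelu}


def get_activation(name: str):
    try:
        return ACTIVATIONS[name]
    except KeyError:
        raise ValueError(f"unknown activation: {name!r}") from None


def apply_layer(features: list[float], activation: str) -> list[float]:
    # Stand-in for one network layer: applies the chosen activation elementwise.
    act = get_activation(activation)
    return [act(v) for v in features]


if __name__ == "__main__":
    for name in ACTIVATIONS:
        print(name, apply_layer([-1.0, 0.0, 2.0], name))
```

Routing every variant through the same lookup keeps the comparison fair: the data, the layer sizes, and the training settings are shared, so any difference in accuracy or latency can be attributed to the activation alone.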

Gallery

speed_vs_accuracy